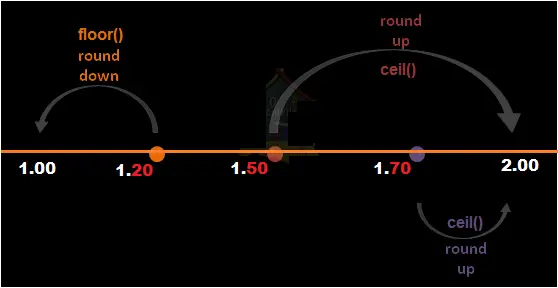

Round up or ceil in pyspark uses ceil() function which rounds up the column in pyspark. Round down or floor in pyspark uses floor() function which rounds down the column in pyspark. Round off the column is accomplished by round() function. Let’s see an example of each.

- Round up or Ceil in pyspark using ceil() function

- Round down or floor in pyspark using floor() function

- Round off the column in pyspark using round() function

- Round off to decimal places using round() function.

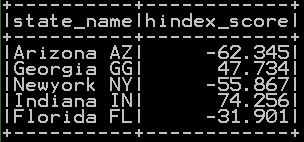

We will be using dataframe df_states

Round up or Ceil in pyspark using ceil() function

Syntax:

colname1 – Column name

ceil() Function takes up the column name as argument and rounds up the column and the resultant values are stored in the separate column as shown below

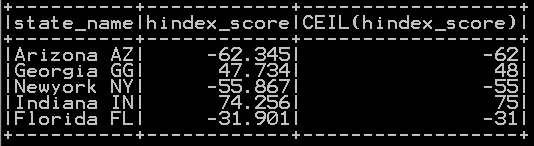

## Ceil or round up in pyspark

from pyspark.sql.functions import ceil, col

df_states.select("*", ceil(col('hindex_score'))).show()

So the resultant dataframe with ceil of “hindex_score” is shown below

Round down or Floor in pyspark using floor() function

Syntax:

colname1 – Column name

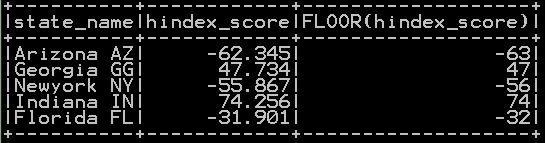

floor() Function in pyspark takes up the column name as argument and rounds down the column and the resultant values are stored in the separate column as shown below

## floor or round down in pyspark

from pyspark.sql.functions import floor, col

df_states.select("*", floor(col('hindex_score'))).show()

So the resultant dataframe with floor of “hindex_score” is shown below

Round off in pyspark using round() function

Syntax:

colname1 – Column name

n – round to n decimal places

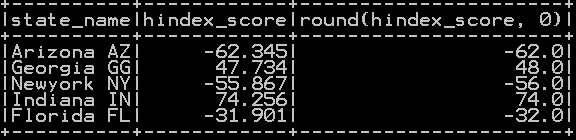

round() Function takes up the column name as argument and rounds the column to nearest integers and the resultant values are stored in the separate column as shown below

######### round off

from pyspark.sql.functions import round, col

df_states.select("*", round(col('hindex_score'))).show()

So the resultant dataframe with rounding off of “hindex_score” column is shown below

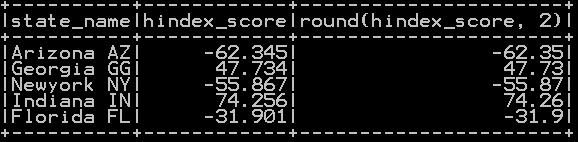

Round off to decimal places using round() function

round() Function takes up the column name and 2 as argument and rounds off the column to nearest two decimal place and the resultant values are stored in the separate column as shown below

########## round off to decimal places

from pyspark.sql.functions import round, col

df_states.select("*", round(col('hindex_score'),2)).show()

So the resultant dataframe with Rounding off of “hindex_score” column to 2 decimal places is shown below

Other Related Topics:

- Typecast Integer to Decimal and Integer to float in Pyspark

- Get number of rows and number of columns of dataframe in pyspark

- Extract Top N rows in pyspark – First N rows

- Absolute value of column in Pyspark – abs() function

- Set Difference in Pyspark – Difference of two dataframe

- Union and union all of two dataframe in pyspark (row bind)

- Intersect of two dataframe in pyspark (two or more)

- Sort the dataframe in pyspark – Sort on single column & Multiple column

- Drop rows in pyspark – drop rows with condition

- Distinct value of a column in pyspark

- Distinct value of dataframe in pyspark – drop duplicates

- Count of Missing (NaN,Na) and null values in Pyspark

- Mean, Variance and standard deviation of column in Pyspark

- Maximum or Minimum value of column in Pyspark

- Raised to power of column in pyspark – square, cube , square root and cube root in pyspark

- Drop column in pyspark – drop single & multiple columns

- Subset or Filter data with multiple conditions in pyspark

- Frequency table or cross table in pyspark – 2 way cross table

- Groupby functions in pyspark (Aggregate functions) – Groupby count, Groupby sum, Groupby mean, Groupby min and Groupby max

- Descriptive statistics or Summary Statistics of dataframe in pyspark

- Rearrange or reorder column in pyspark

- cumulative sum of column and group in pyspark

- Calculate Percentage and cumulative percentage of column in pyspark

- Select column in Pyspark (Select single & Multiple columns)